
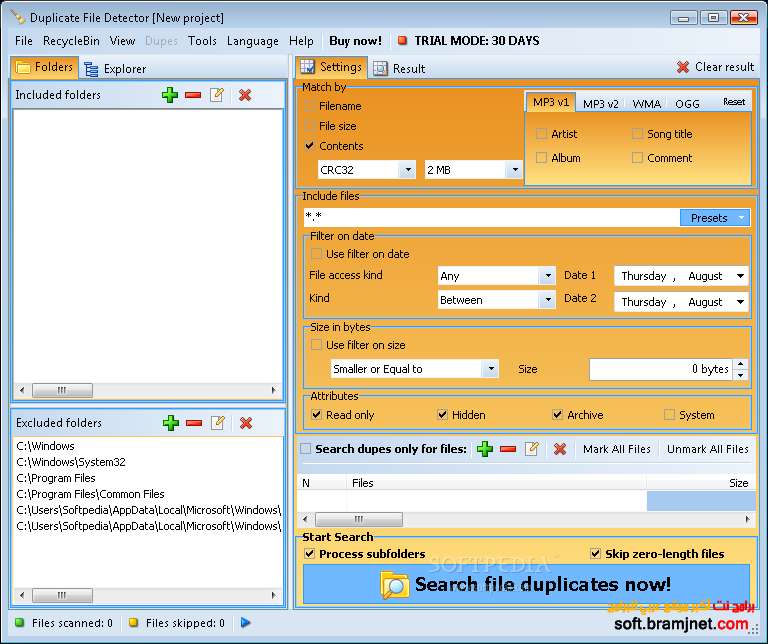

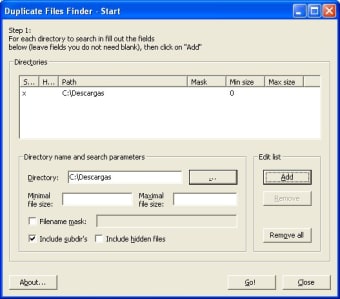

12/5/2023

Duplicate detector application

Databases play an important role in today's IT-based economy. Many industries and systems depend on the accuracy of databases to carry out operations. Therefore, the quality of the information (or the lack thereof) stored in the databases can have significant cost implications for a system that relies on information to function and conduct business.

In an error-free system with perfectly clean data, the construction of a comprehensive view of the data consists of linking (in relational terms, joining) two or more tables on their key fields. Unfortunately, data often lack a unique, global identifier that would permit such an operation. Furthermore, the data are neither carefully controlled for quality nor defined in a consistent way across different data sources. Thus, data quality is often compromised by many factors, including data entry errors (e.g., Microsft instead of Microsoft), missing integrity constraints (e.g., allowing entries such as EmployeeAge=567), and multiple conventions for recording information (e.g., 44 W. ...).

Topics in the outline include:

- 4.3 Supervised and Semi-Supervised Learning
- 5 Improving the Efficiency of Duplicate Detection
- 5.1 Reducing the Number of Record Comparisons
- 5.3 Improving the Efficiency of Record Comparison
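To make the linking problem concrete, here is a minimal Python sketch of why an exact join on a key field breaks down once a data entry error creeps in, and how an approximate comparison can recover the match. The records, field names, helper functions, and the 0.85 threshold are all hypothetical choices for illustration; the similarity measure is the standard library's `difflib.SequenceMatcher`, used here as a stand-in and not as the method of any particular duplicate detection system.

```python
from difflib import SequenceMatcher

# Hypothetical records; the field names and values are illustrative only.
customers = [
    {"company": "Microsoft", "city": "Redmond"},
    {"company": "Oracle", "city": "Austin"},
]
orders = [
    {"company": "Microsft", "amount": 120},  # data entry error in the key
    {"company": "Oracle", "amount": 75},
]


def exact_join(left, right, key):
    """Pair records whose key fields are exactly equal (a plain equi-join)."""
    return [(l, r) for l in left for r in right if l[key] == r[key]]


def similarity(a, b):
    """Case-insensitive similarity score in [0, 1]."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def approximate_join(left, right, key, threshold=0.85):
    """Pair records whose key fields are similar enough.

    The threshold is an assumed value; it would need tuning on real data.
    """
    return [
        (l, r)
        for l in left
        for r in right
        if similarity(l[key], r[key]) >= threshold
    ]


def is_valid_age(age, low=0, high=120):
    """Simple integrity constraint: reject implausible ages (bounds assumed)."""
    return low <= age <= high


exact_matches = exact_join(customers, orders, "company")
approx_matches = approximate_join(customers, orders, "company")
print(len(exact_matches), len(approx_matches))
print(is_valid_age(567))
```

The exact join silently drops the misspelled record, while the approximate join keeps it at the cost of comparing every pair of records. That quadratic cost is what makes reducing the number of record comparisons a concern at scale.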
